Firmus: Inside the AI Factory Company That Is Rethinking How the World Runs AI at Scale

The global race to build AI infrastructure has a well-understood problem at its centre. Training and running the models that are reshaping industries requires an almost incomprehensible amount of electricity, water, and physical space. The data centres being built today at hyperscale, by companies spending hundreds of billions of dollars, are consuming power at rates that strain regional grids, drawing water in quantities that challenge local ecosystems, and operating at efficiency levels that were adequate only for a world of relatively modest AI workloads.

The next generation of AI will not fit inside the infrastructure built for the last one. Firmus was incorporated in Australia in 2019 with a specific conviction: that the only way to build AI infrastructure that keeps pace with where AI is going is to design every layer of it from first principles, from the cooling system to the grid connection to the GPU orchestration software. Seven years later, with deployments running in Singapore, a flagship campus under construction in Tasmania, and $1.35 billion in equity raised over six months, Firmus is no longer making an argument. It is building a proof.

An Engineering Company Disguised as an Infrastructure Provider

The founding premise of Firmus is that most data centres are not actually designed for AI. They are general-purpose computing facilities with AI workloads dropped into them. The cooling is a retrofit, the power distribution is overbuilt for redundancy rather than optimised for density, and the software governing the relationship between the GPU clusters and the physical environment is an afterthought. The result is facilities that consume far more energy per unit of compute than the laws of physics require.

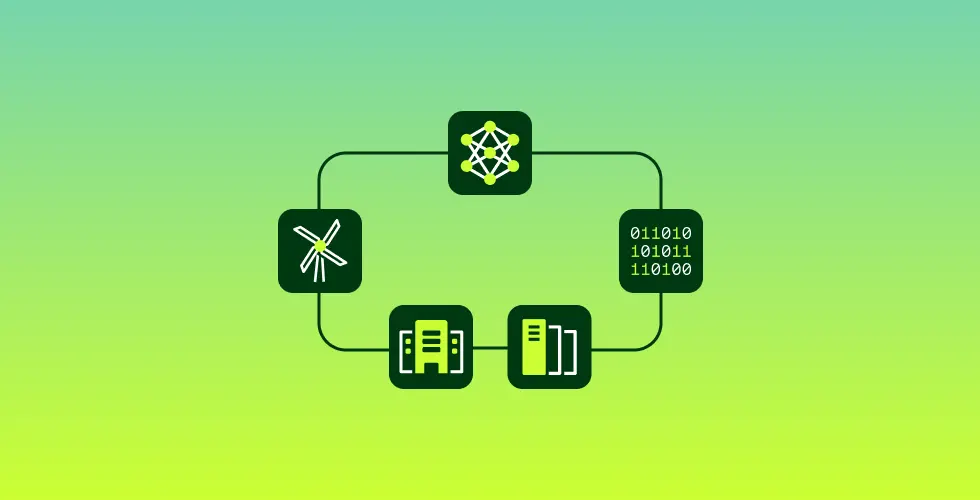

Firmus’s answer is a concept it calls the AI Factory: not a repurposed data centre, but a computing facility designed exclusively around the requirements of AI training and inference, built modularly, and operated as a unified physical and software system from day one.

The physical building block of a Firmus AI Factory is the HyperCube, a module housing 32 NVL racks in a primarily liquid-cooled, high-density configuration. Each HyperCube is designed for one output: AI compute. The cooling architecture requires no airflow and no retrofit tanks; it runs primarily on direct-to-chip liquid cooling and achieves a Power Usage Effectiveness (PUE, the ratio of total facility energy to the energy delivered to IT equipment) approaching 1.0. That is the theoretical minimum, at which no energy is spent on supporting infrastructure rather than on computation.
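Because PUE is a simple ratio (total facility power divided by IT equipment power), the practical gap between a sub-1.10 facility and a less efficient one is easy to work through. The sketch below is a minimal Python illustration, assuming a hypothetical 100 MW IT load; the 1.50 comparison figure is an illustrative assumption rather than a measured industry value, and the helper names `pue` and `overhead_mw` are hypothetical.

```python
"""Worked example of Power Usage Effectiveness (PUE).

PUE = total facility power / IT equipment power. A value of 1.0 would mean
every unit of power reaches the IT equipment; anything above 1.0 is overhead
(cooling, power conversion, lighting). All loads below are hypothetical.
"""


def pue(total_facility_mw: float, it_load_mw: float) -> float:
    """Return PUE from total facility power and IT power (same units)."""
    if it_load_mw <= 0:
        raise ValueError("IT load must be positive")
    if total_facility_mw < it_load_mw:
        raise ValueError("facility power cannot be below IT power")
    return total_facility_mw / it_load_mw


def overhead_mw(it_load_mw: float, pue_value: float) -> float:
    """Power spent on non-IT infrastructure for a given IT load and PUE."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * (pue_value - 1.0)


if __name__ == "__main__":
    it_load = 100.0  # hypothetical IT load in MW
    cases = [
        ("PUE 1.10 (Southgate upper bound)", 1.10),
        ("PUE 1.50 (illustrative comparison)", 1.50),
    ]
    for label, value in cases:
        facility = it_load * value  # total draw implied by this PUE
        print(f"{label}: facility {facility:.1f} MW, "
              f"overhead {overhead_mw(it_load, value):.1f} MW")
```

Run as a script, it shows that at PUE 1.10 the assumed 100 MW IT load carries about 10 MW of infrastructure overhead, against 50 MW at the illustrative 1.50.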

The power distribution uses rack-level electrical delivery with CDU-fed systems and no Power Distribution Unit (PDU) overhead, eliminating one of the most common sources of efficiency loss in conventional data centre design. The facility also runs without water for more than 350 days per year, a radical departure from air-cooled and evaporatively cooled facilities that routinely consume millions of litres of water annually.

- <1.10 PUE at Project Southgate, approaching the theoretical minimum

- 99% less water used versus traditional data centre cooling

- 36,000 GPUs planned at Southgate Tasmania, Stages 1A and 1B combined

- 7+ years of proprietary R&D embedded in every AI Factory build

AI FactoryOS: The Software That Governs Everything

Physical efficiency at the hardware level is necessary but not sufficient. The second major dimension of Firmus’s engineering differentiation is AI FactoryOS, its proprietary operating system for the entire AI Factory. Most data centre operators bolt observability and management software onto infrastructure that was not designed to be governed by it, producing systems where the cooling, the power distribution, the GPU clusters, and the grid connection are each managed by separate tools with limited ability to coordinate with each other.

AI FactoryOS governs all of them as a unified system: GPU telemetry, cooling control, thermal data, power management, and grid interaction are integrated into a single orchestration layer rather than managed in parallel. The result is a facility that can optimise across all of these variables simultaneously, reducing waste, improving uptime, and responding to real-time grid conditions in ways that a conventionally managed facility cannot.

This grid responsiveness is one of the more strategically significant capabilities Firmus has built. Its UPS systems are engineered to deliver Frequency Control Ancillary Services (FCAS), load modulation, and firming capacity, meaning each Firmus AI Factory is not merely a consumer of grid power but a participant in maintaining the stability of the grid itself.
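To make the idea of load modulation concrete, the sketch below shows a generic proportional ("droop") frequency response of the kind a flexible load or UPS fleet could provide: no action inside a deadband around nominal frequency, then a linear change in grid draw up to a capped flexible capacity. It is not Firmus’s implementation and not a statement of FCAS market rules, which are set by the market operator. The 50 Hz nominal frequency matches the Australian grid, but the deadband, full-response threshold, 20 MW flexible capacity, and the names `DroopConfig` and `grid_draw_adjustment_mw` are hypothetical assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class DroopConfig:
    # All values below are illustrative assumptions, not market parameters.
    nominal_hz: float = 50.0        # nominal grid frequency
    deadband_hz: float = 0.15       # no response while |deviation| <= deadband
    full_response_hz: float = 0.5   # |deviation| at which full capacity is used
    flexible_mw: float = 20.0       # hypothetical flexible load / UPS capacity


def grid_draw_adjustment_mw(freq_hz: float, cfg: DroopConfig) -> float:
    """Return the change in grid draw in MW (negative = draw less from the grid)."""
    span = cfg.full_response_hz - cfg.deadband_hz
    if span <= 0:
        raise ValueError("full_response_hz must exceed deadband_hz")
    deviation = freq_hz - cfg.nominal_hz
    if abs(deviation) <= cfg.deadband_hz:
        return 0.0
    # Linear droop from the deadband edge to full_response_hz, then clamp at 1.0.
    fraction = min((abs(deviation) - cfg.deadband_hz) / span, 1.0)
    magnitude = fraction * cfg.flexible_mw
    # Under-frequency: draw less (e.g. carry load on UPS batteries).
    # Over-frequency: draw more (e.g. recharge batteries).
    return -magnitude if deviation < 0 else magnitude
```

With these assumed settings, a dip to 49.5 Hz yields an adjustment of -20.0 MW (the full flexible capacity), while 50.1 Hz falls inside the deadband and yields 0.0. A linear droop is used here only because it is the simplest well-understood response shape; a real participant would follow whatever response profile its market registration requires.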

In regions like Tasmania, where Firmus’s flagship Project Southgate is being built to run on firmed Tasmanian hydro, wind, and solar power, this grid integration model turns the AI Factory from a source of strain on the energy system into a resource that helps balance it. For governments and grid operators evaluating where to site AI infrastructure, this is a genuinely differentiated value proposition.

“AI demand is accelerating faster than the infrastructure required to support it. Firmus is closing that gap with an energy-efficient AI Factory model purpose-built for next-generation compute at a global scale.” – Robert Yin, General Partner and Head of AI Infrastructure, Coatue

The Four Technical Pillars Separating Firmus From the Market

- Liquid-Everywhere Cooling: No airflow required. No retrofit immersion tanks. Direct-to-chip liquid cooling integrated from the ground up achieves PUE approaching 1.0 and operates without water for 350+ days annually, eliminating the two largest environmental liabilities of conventional AI data centres.

- AI FactoryOS Orchestration: A proprietary operating system governing GPU telemetry, cooling, power, and grid interaction as a single unified layer rather than separate tools bolted together. Enables real-time optimisation across all physical and compute variables simultaneously.

- NVIDIA Vera Rubin DSX Alignment: Firmus AI Factory infrastructure is built in alignment with NVIDIA’s Vera Rubin DSX reference design, ensuring the platform is architected for next-generation accelerated computing rather than retrofitted for it. NVIDIA participates as an investor in the latest round.

- Vertically Integrated Supply Chain: Unlike operators who procure and assemble components from the market, Firmus designs and controls its supply chain from chip to grid. This reduces deployment costs, accelerates build timelines, and ensures that efficiency gains at the component level are not lost at the system level.

Project Southgate: Australia’s Sovereign AI Infrastructure Initiative

The most concrete expression of Firmus’s engineering platform is Project Southgate, its long-term national AI infrastructure programme in Australia. The flagship site is in Launceston, Northern Tasmania, on former heavy-manufacturing land that is being repurposed as a dedicated AI campus. Stages 1A and 1B are targeting 36,000 GPUs, powered entirely by firmed Tasmanian renewables, with a PUE below 1.10 and near-total elimination of water consumption. Tasmania was selected precisely because it has an existing surplus of renewable generation capacity, primarily hydro, which means siting compute there does not strain the grid but instead puts underused generation capacity to work.

In March 2026, Firmus signed a multi-year agreement with a global hyperscale customer at Project Southgate, confirming that the programme has commercial demand from the tier of customers whose compute requirements are largest and whose standards for reliability, efficiency, and security are most demanding. The same month, Firmus announced a partnership with NVIDIA to build grid-integrated AI Factory software, deepening the technical relationship between Firmus’s AI FactoryOS platform and NVIDIA’s hardware ecosystem.

Southgate is also the foundation for Firmus’s stated ambition to export efficient AI compute from Australia to international markets, positioning the programme not only as sovereign infrastructure for Australian researchers, businesses, and government, but also as a globally competitive AI compute exporter.

The Funding That Validates the Platform

In April 2026, Firmus announced a $505 million strategic equity investment led by Coatue, one of the most active global investors in AI and technology infrastructure, with NVIDIA participating subject to closing conditions. The round is expected to bring Firmus’s total equity raised to $1.35 billion over the preceding six months, at a post-money valuation of $5.5 billion.

This follows a $330 million close in September 2025, in which NVIDIA joined as an investor for the first time, and a $10 billion financing commitment secured in February 2026, led by Blackstone and Coatue, to scale Firmus’s energy-efficient AI infrastructure at pace. The combined picture is of a company that has, in six months, assembled a capital base more commonly associated with hyperscale infrastructure operators than with a company founded in Melbourne seven years ago.

The capital is directed at accelerating the AI Factory rollout across Asia-Pacific, with Project Southgate providing the immediate deployment focus and Firmus’s existing Singapore operations, including two live sites at Loyang and the Media Hub, serving as the operational model for regional expansion. Oliver Curtis, Co-Founder and Co-CEO of Firmus, framed the strategic logic directly: Project Southgate provides a strong foundation for exporting efficient AI compute globally, and the Singapore experience provides a proven blueprint for scaling across Southeast Asia.

The investor roster, which spans Coatue, Blackstone, and NVIDIA, signals conviction not only in the financial returns available from AI infrastructure but also in Firmus’s specific engineering approach as the one most likely to be sustainable at the scale AI’s trajectory demands.

What Firmus Is Actually Competing For

The AI infrastructure market is crowded with capital and not particularly crowded with original ideas. Most of the investment being deployed is financing variations on the same basic model: large air-cooled or partially liquid-cooled facilities, running standard commercial components, managed by conventional data centre operating software, and consuming energy and water at rates that are environmentally and operationally unsustainable as AI workloads grow. Firmus is competing for the customers who have understood that this model has a ceiling, and who are looking for infrastructure that can run the next generation of GPU clusters, not just the current one.

The SemiAnalysis ClusterMAX rankings, which evaluate GPU cloud performance on technical criteria rather than marketing claims, placed Firmus in the top three globally in both 2024 and 2025. Its MLPerf-certified benchmark results provide independently verified performance data.

The Cleantech Group placed Firmus in the APAC Top 25 for 2025. These are not awards for ambition. They are measurements of a platform that is operating at the performance levels its engineering is designed to produce. The $5.5 billion valuation the latest round implies is a bet that those performance levels, delivered at the scale Project Southgate and the broader Asia-Pacific rollout represent, will make Firmus one of the defining infrastructure companies of the AI era.