ScaleOps Automates Kubernetes and Slashes Cloud Costs: The Rise of the Fully Autonomous Cloud

The Cloud Cost Crisis in the Age of AI

Cloud computing has become the backbone of modern digital infrastructure, but with scale has come a growing problem: cost inefficiency. As organizations migrate workloads to Kubernetes and adopt AI-driven applications, cloud spending has surged, often without corresponding visibility or control.

Kubernetes, while powerful, introduces operational complexity that requires constant tuning. Teams must manually configure resource allocation, manage scaling policies, and ensure workloads perform reliably. This process is time-consuming and error-prone, and it often leaves resources over-provisioned.

The rise of AI workloads has further intensified the issue. GPU infrastructure, essential for machine learning and model training, is among the most expensive resources in the cloud. Mismanagement of these resources can lead to substantial financial waste. This combination of complexity and cost has created demand for a new approach to cloud management.

ScaleOps and the Shift Toward Autonomous Cloud Infrastructure

ScaleOps is positioning itself at the center of this shift with an autonomous cloud platform designed to manage Kubernetes and AI infrastructure in real time. Rather than relying on manual configuration or static rules, the platform continuously analyzes application behavior and dynamically adjusts resource allocation.

The company’s vision extends beyond optimization tools. It aims to build what it describes as a Cloud Operating System for the AI era, where infrastructure management becomes largely self-governing.

This approach reflects a broader transformation in cloud computing, where automation is evolving from reactive assistance to proactive, autonomous decision-making.

How ScaleOps Automates Kubernetes Environments

At the core of ScaleOps’ platform is its ability to optimize Kubernetes environments without human intervention. The system monitors workloads continuously, using application-level context to determine how resources should be allocated. One of its key capabilities is automated pod rightsizing, which ensures that containers receive the appropriate amount of CPU and memory based on actual usage. This prevents over-provisioning, a common source of cloud waste.
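
To make the idea of rightsizing concrete, here is a minimal Python sketch of one common heuristic: set a container's request to a high percentile of observed usage plus some headroom. The article does not describe ScaleOps' actual algorithm, so the function names, the 95th-percentile choice, the 15 percent headroom, and the usage samples are all illustrative assumptions.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def recommend_request(usage_samples, pct=95, headroom=0.15):
    """Suggest a request: a high percentile of observed usage plus headroom.

    pct and headroom are assumed defaults, not values from any vendor.
    """
    return percentile(usage_samples, pct) * (1 + headroom)

# Hypothetical CPU usage samples (millicores) for a single container.
cpu_usage_m = [120, 135, 150, 140, 410, 160, 155, 145, 150, 138]
current_request_m = 1000  # hypothetical over-provisioned request

recommended_m = recommend_request(cpu_usage_m)
print(f"current request: {current_request_m}m, recommended: {recommended_m:.0f}m")
```

In this made-up case the container requests far more CPU than it ever uses, so a usage-based recommendation would free most of the reserved capacity while still covering the observed spike.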

The platform also includes replica optimization, which adjusts the number of running instances based on demand, and node optimization, which manages the underlying infrastructure supporting these workloads.
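
As a rough illustration of demand-based replica scaling, the sketch below uses the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: scale the replica count by the ratio of the observed metric to its target, then round up. Whether ScaleOps uses this rule is not stated in the article. The function name and the min/max bounds are assumptions, and the tolerance band that the real autoscaler applies is omitted for brevity.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling: ceil(current * observed / target), clamped to bounds."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))

# Hypothetical case: 4 replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```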

Smart pod placement further enhances efficiency by ensuring workloads are deployed on the most suitable nodes, taking into account performance requirements and cost considerations. These capabilities work together to create a system that continuously self-optimizes, reducing the need for manual intervention.
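
Cost-aware placement can be pictured as a filter-then-score step. The following sketch is a simplified assumption rather than ScaleOps' or the Kubernetes scheduler's real logic: it keeps only nodes with enough free CPU and memory, then picks the cheapest, breaking ties in favor of the tightest fit. The `Node` fields and the prices are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Node:
    name: str
    free_cpu_m: int      # free CPU in millicores
    free_mem_mib: int    # free memory in MiB
    hourly_cost: float   # hypothetical price per hour

def pick_node(nodes, cpu_m, mem_mib):
    """Return the cheapest node that fits the pod, or None if none fits."""
    candidates = [n for n in nodes
                  if n.free_cpu_m >= cpu_m and n.free_mem_mib >= mem_mib]
    if not candidates:
        return None
    # Cheapest first; among equally priced nodes prefer the one with less free CPU.
    return min(candidates, key=lambda n: (n.hourly_cost, n.free_cpu_m))

nodes = [
    Node("node-a", free_cpu_m=500, free_mem_mib=1024, hourly_cost=0.10),
    Node("node-b", free_cpu_m=2000, free_mem_mib=4096, hourly_cost=0.20),
    Node("node-c", free_cpu_m=4000, free_mem_mib=8192, hourly_cost=0.08),
]
chosen = pick_node(nodes, cpu_m=1000, mem_mib=2048)
print(chosen.name if chosen else "no node fits")
```

A production scheduler would also weigh affinity rules, taints, and performance requirements; this sketch covers only the fit and cost considerations mentioned above.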

Managing AI and GPU Infrastructure at Scale

As AI adoption accelerates, managing GPU resources has become a critical challenge for enterprises. GPUs are not only expensive but also limited in availability, making efficient utilization essential.

ScaleOps extends its automation capabilities to GPU infrastructure, optimizing allocation based on workload requirements. The platform also supports model optimization and performance-aware observability, providing insights into how AI workloads are performing and where improvements can be made.

By applying real-time optimization to AI infrastructure, ScaleOps aims to address one of the most pressing challenges in modern cloud computing. This focus positions the company within a rapidly growing category of AI infrastructure management platforms.

Achieving Cost Reduction Through Continuous Optimization

ScaleOps claims its platform can reduce cloud costs by up to 80 percent. The company attributes these savings to continuous, real-time optimization across multiple layers of infrastructure. The platform identifies unused or underutilized resources and reallocates them dynamically. It also uses spot optimization strategies to take advantage of lower-cost compute options where appropriate.
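
A back-of-the-envelope calculation shows how rightsizing and spot capacity can compound. Every number in the sketch below is hypothetical (the vCPU counts, the hourly price, and the spot discount are not ScaleOps figures or real cloud prices); it is meant only to show the arithmetic.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12

def monthly_cost(vcpus, price_per_vcpu_hour):
    """Monthly cost of running a fixed number of vCPUs around the clock."""
    return vcpus * price_per_vcpu_hour * HOURS_PER_MONTH

def savings_fraction(before, after):
    """Share of the original bill that was eliminated."""
    return (before - after) / before

on_demand_price = 0.04   # hypothetical dollars per vCPU-hour
spot_discount = 0.60     # hypothetical discount for spot capacity

requested_vcpus = 400    # what workloads reserve before optimization
rightsized_vcpus = 160   # what they reserve after rightsizing
spot_vcpus = 80          # portion of the rightsized fleet moved to spot

baseline = monthly_cost(requested_vcpus, on_demand_price)
rightsized = monthly_cost(rightsized_vcpus, on_demand_price)
with_spot = (monthly_cost(rightsized_vcpus - spot_vcpus, on_demand_price)
             + monthly_cost(spot_vcpus, on_demand_price * (1 - spot_discount)))

print(f"rightsizing only: {savings_fraction(baseline, rightsized):.0%} saved")
print(f"rightsizing + spot: {savings_fraction(baseline, with_spot):.0%} saved")
```

Under these assumed inputs most of the reduction comes from shrinking over-provisioned requests, with spot pricing adding a smaller second-order gain. Actual savings depend entirely on how over-provisioned an environment is to begin with.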

Unlike traditional cost management tools that provide recommendations, ScaleOps executes changes automatically. This distinction is significant, as it removes the dependency on manual action and ensures that optimization is consistently applied. For organizations facing escalating cloud bills, this approach offers a potential pathway to more sustainable infrastructure spending.

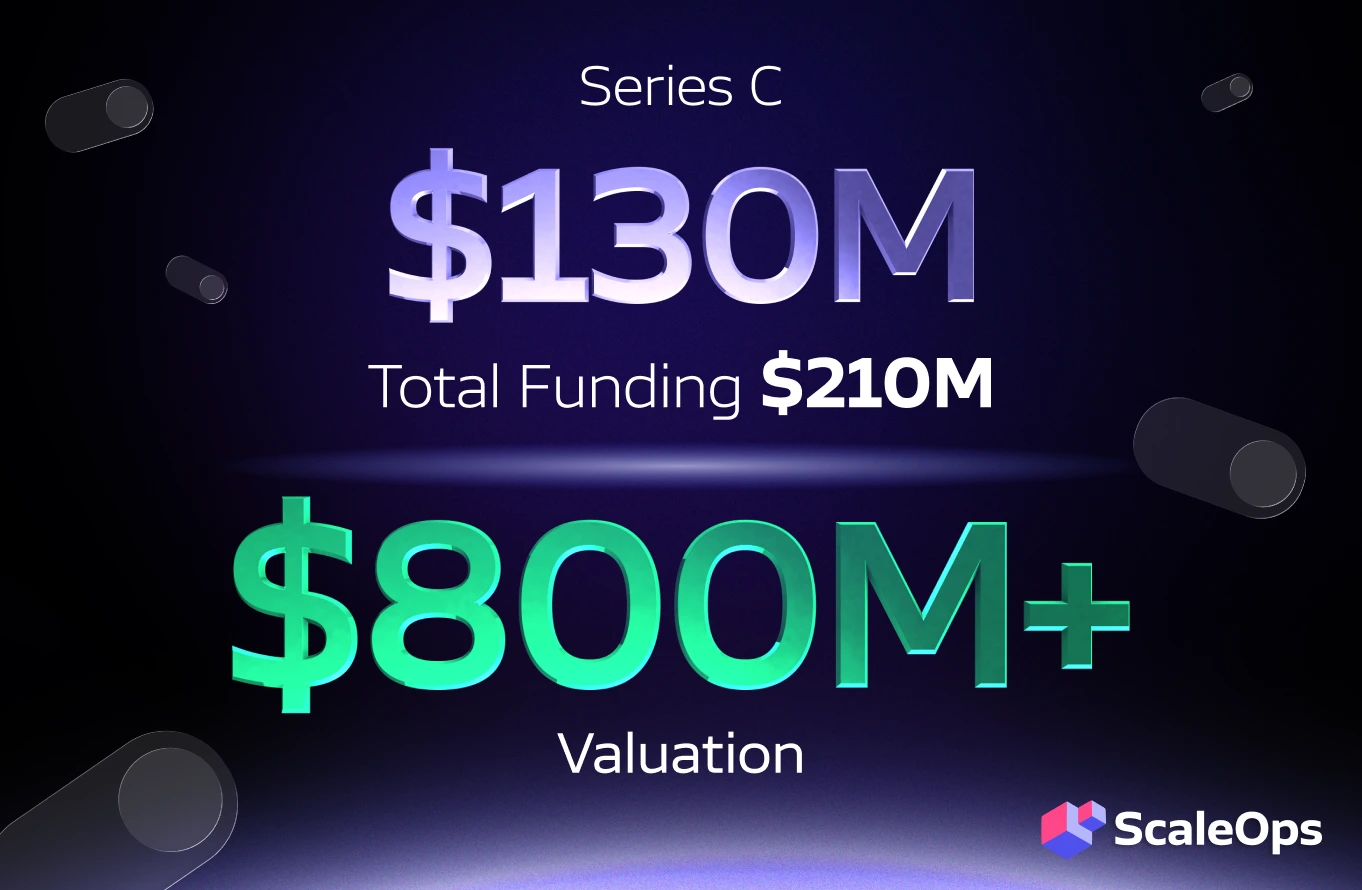

ScaleOps Raises $130 Million Series C to Expand Autonomous Cloud Vision

ScaleOps has recently raised $130 million in a Series C funding round, bringing its valuation to over $800 million. The funding reflects strong investor confidence in the company’s approach to cloud optimization and its broader vision for autonomous infrastructure.

The capital will be used to further develop the platform and expand its capabilities, particularly in the area of AI infrastructure management. As enterprises continue to scale their use of Kubernetes and AI workloads, demand for solutions that can simplify and optimize these environments is expected to grow.

The funding milestone also signals the emergence of autonomous cloud management as a distinct and increasingly important category within the cloud ecosystem.

From DevOps to Autonomous Operations

The rise of platforms like ScaleOps suggests a shift in how infrastructure is managed. Traditionally, DevOps teams have been responsible for configuring, monitoring, and optimizing cloud environments.

However, as systems become more complex, manual management is becoming less viable. Autonomous platforms offer an alternative, where routine tasks are handled by AI systems, allowing teams to focus on higher-level strategy and innovation.

This transition does not eliminate the need for human oversight but changes the nature of the role. Engineers move from direct control to supervision and governance of automated systems.

The Future of the Fully Autonomous Cloud

The concept of a fully autonomous cloud represents a significant evolution in infrastructure management. As cloud environments continue to grow in complexity, the ability to self-optimize in real time will become increasingly valuable.

ScaleOps’ approach demonstrates how artificial intelligence can be applied not just to applications but to the infrastructure that supports them. By integrating automation, observability, and optimization into a single platform, the company is contributing to a new model of cloud operations.

While the idea of a completely autonomous cloud is still evolving, the direction is clear. Organizations are moving toward systems that can manage themselves, adapt to changing conditions, and optimize performance without constant human intervention. Platforms like ScaleOps signal a fundamental shift in cloud computing, where autonomy, real-time optimization, and AI-driven decision-making could become the standard for managing increasingly complex and costly infrastructure.